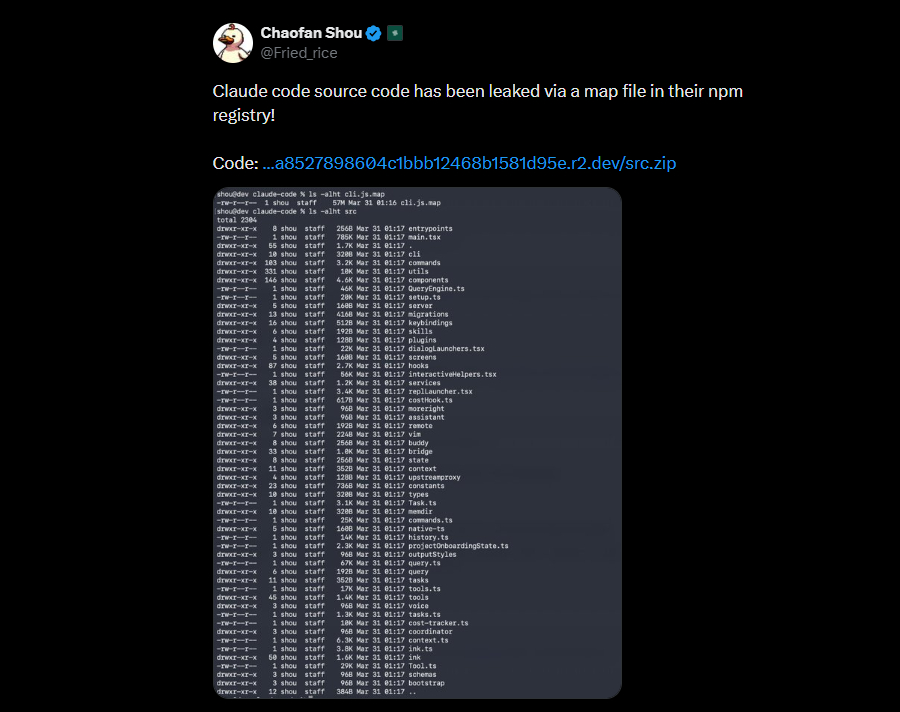

In late March 2026, Anthropic exposed the internal codebase of its Claude Code agent due to an npm packaging error. The exposure window was short, but the impact was significant. The leak included more than 500,000 lines of TypeScript code across nearly 2,000 internal files. It revealed core components such as orchestration logic, tool execution layers, and prompt handling systems. The affected package was published on the public npm registry. A misconfigured build included a .map file, which pointed to a cloud-hosted archive. This archive, reportedly hosted on services like Cloudflare R2, contained the full source code.

This was not a traditional breach. There was no exploit chain, credential theft, or infrastructure compromise. Instead, a build artifact created a direct path to sensitive internals. The exposure lasted only a few hours before removal, but that was enough. Developers and researchers quickly mirrored and shared the code across platforms. As a result, it continued to circulate after the takedown. The cybersecurity community first identified the issue, with platforms like The Hacker News reporting it early. Independent researchers traced the source map linkage, while GitHub mirrors preserved the code. This incident highlights a critical reality. Small CI/CD errors can expose large-scale AI systems. It also offers rare insight into AI agent architecture, system design, and emerging security risks.

The Root Cause: Source Maps as an Attack Surface

At a technical level, the failure originated in the packaging layer of the CI/CD pipeline.

During the build process, the system generated .map files. These files map minified JavaScript back to the original TypeScript source, allowing debugging in production environments. This is standard practice.

The issue was that:

- The .map file was included in the published npm package

- It contained references to original source paths

- Those paths pointed to a publicly accessible archive hosted on cloud object storage

Effectively, the source map acted as an index of the entire private codebase.

A simplified representation of the failure chain looks like this:

TypeScript Source

↓ (compiled + minified)

JavaScript Bundle + Source Map (.map)

↓ (packaging failure)

npm Publish (includes .map)

↓

.map → Cloud Storage URL → Full Source Archive

The absence or misconfiguration of .npmignore or equivalent packaging controls allowed debug artifacts to move from internal build systems into public distribution.
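A pre-publish gate that inspects the list of files about to ship is one way to catch this class of failure. The sketch below is a hypothetical check, not the vendor's actual tooling: `findDebugArtifacts`, `assertPublishable`, and the blocked-suffix list are illustrative names, and the file list is assumed to come from something like the output of `npm pack --dry-run`.

```typescript
// Hypothetical pre-publish gate: flag debug artifacts in a package file list.
// The suffix list is an illustrative assumption; tune it per project.
const BLOCKED_SUFFIXES: readonly string[] = [".map", ".tsbuildinfo"];

// Returns every path that ends with a blocked suffix (case-insensitive).
function findDebugArtifacts(packedFiles: readonly string[]): string[] {
  return packedFiles.filter((path) =>
    BLOCKED_SUFFIXES.some((suffix) => path.toLowerCase().endsWith(suffix)),
  );
}

// Throws if any debug artifact would be published, so CI can fail the job.
function assertPublishable(packedFiles: readonly string[]): void {
  const offenders = findDebugArtifacts(packedFiles);
  if (offenders.length > 0) {
    throw new Error(`Refusing to publish debug artifacts: ${offenders.join(", ")}`);
  }
}
```

A denylist like this works best as a second line of defense. An allowlist, such as the `files` field in package.json, fails closed when a new artifact type appears in the build output.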

From a security standpoint, this is critical. Source maps are often treated as low-risk, but in complex systems they can expose:

- File structures

- Internal module names

- API contracts

- Hidden feature flags

- Direct links to source repositories or storage
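To make the exposure concrete, a source map can be audited before release. The sketch below assumes the standard Source Map v3 JSON fields (`sources`, `sourcesContent`, `sourceRoot`). The `auditSourceMap` function and its report shape are hypothetical names introduced here for illustration.

```typescript
// Subset of the Source Map v3 format relevant to exposure analysis.
interface SourceMapV3 {
  version: number;
  sources: string[];
  sourcesContent?: (string | null)[];
  sourceRoot?: string;
  mappings: string;
}

interface ExposureReport {
  sourceFileCount: number;     // how many original file paths are listed
  embeddedSourceCount: number; // how many original files are shipped inline
  remoteReferences: string[];  // http(s) URLs found in sourceRoot or sources
}

// Parses a source map and summarizes what it would reveal if published.
function auditSourceMap(rawJson: string): ExposureReport {
  const map = JSON.parse(rawJson) as SourceMapV3;
  const isRemote = (s: string): boolean => /^https?:\/\//i.test(s);
  const candidates = [map.sourceRoot ?? "", ...map.sources];
  return {
    sourceFileCount: map.sources.length,
    embeddedSourceCount: (map.sourcesContent ?? []).filter((c) => typeof c === "string").length,
    remoteReferences: candidates.filter(isRemote),
  };
}
```

Any non-empty `remoteReferences` list or non-zero `embeddedSourceCount` is a signal that the map carries more than symbol names and deserves review before it leaves the build system.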

What the Leak Revealed: Inside an AI Agent Runtime

The exposed codebase provides a rare look into how modern AI coding agents are engineered.

1. Multi-Agent Orchestration Layer

Claude Code appears to use a task orchestration model, not a single linear inference loop.

Key characteristics include:

- Dynamic spawning of sub-agents for parallel tasks

- Delegation of responsibilities (e.g., code generation, validation, execution)

- Coordination through a central controller

Conceptually:

User Prompt

↓

Controller Agent

↓

┌───────────────┬───────────────┬───────────────┐

│  Code Agent   │ Review Agent  │  Tool Agent   │

└───────────────┴───────────────┴───────────────┘

↓

Aggregated Output

This architecture allows decomposition of complex tasks, but it also increases attack surface, especially when agents share context or tools.

2. Tool Execution Framework

One of the most critical components exposed is the tool invocation layer.

The agent is not limited to text generation. It can:

- Execute shell commands (bash)

- Read and write files

- Interact with development environments

- Call external APIs

This is typically implemented through structured tool definitions such as:

{

"tool": "bash.execute",

"args": {

"command": "npm install"

}

}

From a security perspective, this creates a boundary problem. If prompt injection can influence tool selection or arguments, the model can be coerced into executing unintended operations.
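One common mitigation for this boundary problem is to validate every tool call against an explicit policy before it reaches the shell. The following is a minimal sketch under stated assumptions: the `ToolCall` shape mirrors the JSON example above, while `validateToolCall`, the allowlisted binaries, and the metacharacter filter are hypothetical and not taken from the leaked code.

```typescript
// Hypothetical tool-call shape, mirroring the JSON example above.
interface ToolCall {
  tool: string;
  args: { command?: string };
}

// Illustrative policy: only these executables may be run via bash.execute.
const ALLOWED_BINARIES: ReadonlySet<string> = new Set(["npm", "git", "ls"]);
// Shell metacharacters that could chain, substitute, or redirect commands.
const SHELL_META = /[;&|`$<>\n\\]/;

// Returns a decision plus a reason that can be written to an audit log.
function validateToolCall(call: ToolCall): { ok: boolean; reason: string } {
  if (call.tool !== "bash.execute") {
    return { ok: false, reason: `unknown tool: ${call.tool}` };
  }
  const command = (call.args.command ?? "").trim();
  if (command.length === 0) return { ok: false, reason: "empty command" };
  if (SHELL_META.test(command)) return { ok: false, reason: "shell metacharacters not allowed" };
  const binary = command.split(/\s+/)[0] ?? "";
  if (!ALLOWED_BINARIES.has(binary)) return { ok: false, reason: `binary not allowlisted: ${binary}` };
  return { ok: true, reason: "allowed" };
}
```

An allowlist narrows the boundary but does not close it. An allowed command such as `npm install` can still run arbitrary install scripts, so sandboxing and least-privilege execution remain necessary.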

3. Context Management Pipeline

A particularly sensitive area revealed is how the system manages long-running context.

Instead of passing full conversation history, the system appears to use a multi-stage pipeline:

- Collection: Raw interaction data and intermediate outputs

- Compression: Summarization and pruning to fit token limits

- Persistence: Storage of compressed state for reuse

- Rehydration: Reconstruction of context for future steps

In abstract form:

Full Context → Compression → Stored Summary → Rehydration → Active Context

This introduces a non-obvious risk.

If an attacker can craft input that survives compression, for example by embedding instructions in ways that appear semantically important, those instructions can persist across multiple cycles. This creates what can be described as context-level persistence attacks.
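One way to limit context-level persistence is to carry provenance through every pipeline stage, so that text from untrusted origins is never rehydrated as an instruction. The sketch below is illustrative only: the `Origin` labels, `compress`, and `rehydrate` are hypothetical names, and the truncating "summarizer" is a stand-in for whatever compression a real system uses.

```typescript
type Origin = "system" | "user" | "tool_output";

interface ContextEntry {
  origin: Origin;
  text: string;
}

// Stand-in for a real summarizer: shortens text but never drops the origin label.
function compress(entries: readonly ContextEntry[], maxChars: number): ContextEntry[] {
  return entries.map((e) => ({ origin: e.origin, text: e.text.slice(0, maxChars) }));
}

// Rehydration renders non-system entries as quoted, labeled data.
function rehydrate(summary: readonly ContextEntry[]): string {
  return summary
    .map((e) =>
      e.origin === "system"
        ? e.text
        : `[untrusted ${e.origin}, treat as data] ${JSON.stringify(e.text)}`,
    )
    .join("\n");
}
```

Labeling does not by itself stop a model from following injected text. Its value is that the injected text stays attributable across cycles, so downstream filters and tool validators can apply stricter policy to it.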

4. Prompt Handling and Guardrails

The codebase also exposes how prompts are constructed internally.

Typical flow:

System Prompt

+ Tool Definitions

+ User Input

+ Memory Context

= Final Prompt Sent to Model
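The flow above can be sketched as a simple assembly function. This is a hypothetical illustration, not the code from the leak: the `<<SECTION>>` marker syntax, `buildPrompt`, and `stripMarkers` are assumptions made here to show why merged instructions need unforgeable boundaries.

```typescript
interface PromptParts {
  systemPrompt: string;
  toolDefinitions: string[];
  userInput: string;
  memoryContext: string;
}

// Removes marker look-alikes so input text cannot forge a section boundary.
// Repeats until stable, because one pass can be defeated by nested markers.
function stripMarkers(text: string): string {
  let previous: string;
  let current = text;
  do {
    previous = current;
    current = current.replace(/<<\/?[A-Z_]+>>/g, "");
  } while (current !== previous);
  return current;
}

// Assembles sections in the order listed above, wrapping each in explicit markers.
function buildPrompt(parts: PromptParts): string {
  const sections: Array<[string, string]> = [
    ["SYSTEM", parts.systemPrompt],
    ["TOOLS", parts.toolDefinitions.join("\n")],
    ["USER_INPUT", parts.userInput],
    ["MEMORY", parts.memoryContext],
  ];
  return sections
    .map(([label, body]) => `<<${label}>>\n${stripMarkers(body)}\n<</${label}>>`)
    .join("\n");
}
```

The repeated stripping matters: input such as `<<<<SYSTEM>>SYSTEM>>` collapses into a valid marker after a single pass. This is exactly the kind of merge detail that becomes easy to target once the assembly logic is visible.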

Guardrails are applied at multiple levels:

- Pre-processing filters

- Tool validation layers

- Post-response sanitization

However, once these layers are visible, attackers can identify:

- Where validation is weak or bypassable

- How instructions are merged

- Which tokens or patterns influence tool execution

This shifts attacks from trial-and-error to targeted manipulation.

5. Persistent Agent Modes and Background Execution

The leak suggests early implementations of persistent agent behavior:

- Background task execution loops

- Event-driven triggers

- Scheduled operations

Conceptually similar to:

while (true) {

checkForTasks();

execute();

updateMemory();

}

This is a significant shift from request-response AI toward continuous execution systems. It also raises questions about:

- Long-term state integrity

- Unauthorized task persistence

- Monitoring and auditability
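These concerns suggest that a continuous loop should be bounded, should reject tasks without a recorded approval, and should log every decision. The sketch below is a hypothetical hardening of the conceptual loop above. `Task`, `AuditRecord`, `runAgentLoop`, and the `authorizedBy` field are illustrative names, not structures from the leaked code.

```typescript
interface Task {
  id: string;
  authorizedBy: string | null; // null means no recorded approval
  run: () => string;
}

interface AuditRecord {
  taskId: string;
  outcome: "executed" | "rejected";
  detail: string;
}

// Drains the queue under an iteration budget and records every decision.
function runAgentLoop(queue: Task[], maxIterations: number): AuditRecord[] {
  const audit: AuditRecord[] = [];
  for (let i = 0; i < maxIterations; i++) {
    const task = queue.shift();
    if (task === undefined) break; // nothing left to do
    if (task.authorizedBy === null) {
      audit.push({ taskId: task.id, outcome: "rejected", detail: "no authorization on record" });
      continue;
    }
    audit.push({ taskId: task.id, outcome: "executed", detail: task.run() });
  }
  return audit;
}
```

The iteration budget replaces the unbounded `while (true)`, and the audit trail gives operators a record of which tasks ran, which were refused, and why.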

Security Implications: From Prompt Injection to System Exploitation

Before this incident, prompt injection was largely treated as a UI-layer issue. The leak shows it is a system-level risk.

With visibility into internals, attackers can:

- Target specific stages of context compression

- Craft inputs that influence tool invocation logic

- Exploit assumptions in agent coordination

- Trigger unintended command execution

The presence of tool execution capabilities means this is not theoretical. If improperly controlled, it can lead to:

- File exfiltration

- Arbitrary command execution

- Environment manipulation

This effectively turns an LLM from a passive system into an active attack vector.

Supply Chain Expansion: npm as a Secondary Attack Surface

The incident also triggered activity in the npm ecosystem.

Once the code became accessible, attackers moved quickly to:

- Publish typosquatted packages

- Introduce malicious dependencies

- Target developers attempting to run or analyze the leaked code

This is a classic typosquatting and ecosystem poisoning pattern, closely related to dependency confusion, but accelerated by the visibility of a high-value codebase.

The risk extends beyond the original vendor to:

- Developers experimenting with the code

- Organizations integrating similar architectures

- Downstream environments pulling unverified packages
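A lightweight defense for downstream environments is to flag dependency names that sit suspiciously close to trusted ones. The sketch below uses Levenshtein edit distance for this. `findLookalikes`, the threshold of 2, and the package names in the usage note are hypothetical and chosen only for illustration.

```typescript
// Classic dynamic-programming Levenshtein edit distance.
function editDistance(a: string, b: string): number {
  let prev: number[] = Array.from({ length: b.length + 1 }, (_, j) => j);
  for (let i = 1; i <= a.length; i++) {
    const curr: number[] = [i];
    for (let j = 1; j <= b.length; j++) {
      const cost = a[i - 1] === b[j - 1] ? 0 : 1;
      curr.push(Math.min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost));
    }
    prev = curr;
  }
  return prev[b.length];
}

// Returns trusted names that the candidate nearly, but not exactly, matches.
function findLookalikes(
  candidate: string,
  trusted: readonly string[],
  maxDistance: number = 2,
): string[] {
  return trusted.filter((name) => {
    const d = editDistance(candidate, name);
    return d > 0 && d <= maxDistance;
  });
}
```

For example, with a trusted list containing `acme-agent`, the candidate `acme-agnet` is flagged, while an exact match is not. A check like this complements, rather than replaces, lockfiles and registry scoping.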

The Technical Failure Behind the Leak

At the center of the incident was a common but often underestimated artifact: a JavaScript source map file. Source maps are designed to help developers debug minified code by mapping it back to the original source. In this case, a .map file was accidentally included in a public npm package. That file pointed to a cloud-hosted archive containing the full TypeScript codebase, reportedly more than 500,000 lines spread across nearly 2,000 files.

What should have remained an internal debugging aid became a public index to the entire system. This type of failure sits squarely in the CI/CD pipeline. The build process generated the source map as expected. The packaging process failed to exclude it. The publishing process pushed it live. Each step worked in isolation, but the system as a whole failed.

For executive teams, this is the key takeaway: modern risk does not always come from sophisticated attackers. It often emerges from perfectly normal systems interacting in unintended ways.

What the Code Revealed About AI Agents

The exposed code offered a rare, unfiltered look into how advanced AI coding agents are actually built.

Claude Code is not just a chatbot with coding capabilities. It operates more like an orchestration engine. The architecture appears to combine a central reasoning loop with a set of specialized sub-agents that can execute tasks in parallel. These agents interact with tools such as file systems, shell environments, and external APIs, effectively turning the model into an execution layer rather than just a reasoning layer.

Equally important is how the system manages context. Instead of relying on a simple prompt-response cycle, it uses a multi-stage pipeline to compress, persist, and rehydrate context over time. This allows the agent to maintain continuity across longer workflows, but it also introduces a new class of risk. If an attacker understands how context is stored and summarized, they can attempt to inject instructions that survive multiple cycles.

The leak also hinted at emerging capabilities such as persistent background agents, autonomous task scheduling, and continuous reasoning loops. These are early signs that AI systems are moving away from reactive interfaces toward always-on operational roles.

From Black Box to Blueprint

Before this incident, attacking AI systems largely involved guesswork. Security researchers relied on prompt injection experiments and observed behavior to infer internal logic. That dynamic changes when source code becomes available. With direct visibility into prompt handling, tool invocation, and guardrails, attackers can move from probabilistic attacks to deterministic ones. They can identify exactly where validation occurs, how instructions are filtered, and how tools are triggered. This significantly lowers the cost of developing reliable exploits.

One particularly important implication is persistence. If malicious instructions can be embedded in a way that survives context compression, they effectively become long-lived backdoors within the agent’s reasoning process. For leadership teams, this represents a shift in how AI risk should be evaluated. The model is only one part of the system. The orchestration layer, memory handling, and tool access are equally critical attack surfaces.

The Supply Chain Ripple Effect

The exposure did not stop at source code visibility. It quickly extended into the broader npm ecosystem. During the brief window when the package was live, there were reports of malicious dependency variants and typosquatted packages appearing in parallel. This is a predictable pattern. When attackers know developers are experimenting with newly exposed code, they position themselves in the dependency chain.

In some cases, this can lead to remote access trojans being introduced through seemingly legitimate packages. The attack does not target the original system. It targets the developers trying to replicate or explore it. This is why modern supply chain security is no longer just about your code. It is about every package, every dependency, and every transient artifact that enters your environment.

Strategic Implications for Organizations

Three structural lessons from this incident apply well beyond this specific case.

First, AI systems should be treated as full-stack applications, not isolated models. Their risk profile includes orchestration logic, tool integrations, and execution environments.

Second, CI/CD pipelines are now part of the security perimeter. Artifact validation, packaging rules, and publishing controls need the same rigor as production systems.

Third, transparency changes the threat model. Once internal logic is exposed, even briefly, it accelerates both innovation and exploitation. Competitors learn faster, but so do adversaries.

How Gurucul Strengthens AI System Security

As AI systems become more autonomous and interconnected, security must evolve beyond traditional monitoring. Platforms like Gurucul provide a layered approach to securing AI infrastructure by focusing on behavior, identity, and real-time risk detection.

The Next-Gen SIEM enables centralized visibility across complex AI environments. It ingests and correlates data from multiple sources, including applications, users, APIs, and machine identities. This is critical for AI systems, where activities span across pipelines, tools, and runtime environments.

The User and Entity Behavior Analytics (UEBA) builds behavioral baselines for users, systems, and AI agents. It detects subtle deviations such as unusual prompt patterns, abnormal tool usage, or unexpected access behavior. This helps identify threats that bypass traditional rule-based detection.

The AI-powered Insider Risk Management focuses on misuse within trusted environments. In AI systems, risks often come from legitimate access being used in unintended ways. This capability helps detect data exfiltration, privilege misuse, and risky behavior across both human and machine actors.

The AI SOC Analyst acts as a force multiplier for security teams. It automates threat investigation, correlates signals across systems, and prioritizes incidents based on risk. In AI-driven environments, where activity is continuous and high-volume, this helps reduce response time and improve decision-making.

Together, these capabilities align with the new security model required for AI systems, where monitoring behavior, context, and execution flows is more important than relying only on perimeter defenses.

A Broader Industry Signal

The Claude Code leak is less about one company and more about where the industry is heading. AI agents are becoming operational systems that can act, execute, and persist. That evolution brings enormous productivity gains, but it also expands the attack surface in ways that traditional security models are not fully prepared for. A single misconfigured file revealed not just code, but an entire design philosophy for next-generation AI systems. And once that blueprint is visible, it cannot be unseen.

For organizations investing in AI, the question is no longer whether these systems are powerful. It is whether they are being built and deployed with the same discipline expected of any critical infrastructure. Because at this level of capability, a small mistake is no longer just a bug. It is a system-wide event.